Confessions of a F2P Game Programmer

Free-to-play (F2P) games have earned a mixed reputation in the last couple of years. Some people portray them as the current incarnation of the Antichrist, while users flock to them in numbers that even successful premium titles can’t dream of. It’s a debate I won’t resolve here. However, after working for two years on a successful F2P title, I want to offer a programmer’s perspective on how such games are built and operated.

From mid-2013 to mid-2015, I was one of the developers of Jelly Splash, a free-to-play matching game from Wooga. Before joining the company in early 2012, I was a web developer. My transition from web to games was rather smooth, as my first product at Wooga was built with HTML5. Jelly Splash, on the other hand, is a fully native app written in Objective-C, a language invented in the 1980s that may easily frighten a JavaScript coder.

After overcoming my fear of square brackets and manual memory management (these were the dark times before ARC), I realized that F2P games and web applications share many architectural similarities.

Before I get into them, a few basic facts about Jelly Splash.

The game was released in mid-August 2013. Version 1.0 featured 100 levels and a relatively small set of features. Today the number of levels exceeds 600. Roughly every 40 levels we introduce a new gameplay feature, such as a new kind of obstacle to beat. Additionally, the game has gained plenty of extra functionality, such as saving the state on the server, seasonal events, and Android support.

As a result, the codebase has grown from about 70,000 lines of code to over 500,000. Granted, most of this code belongs to dependencies, configuration and test files, and “only” about 100,000 lines are the core game code.

The game has been installed over 70,000,000 times, with 31,000,000 of these installs happening on iOS devices and the rest on Android and Facebook (Flash). The game is rated four stars or more on both Google Play and the Apple App Store. Yes, it is very rewarding to work on a product enjoyed by tens of millions of people.

F2P games are services

The main reason why mobile free-to-play games are similar to web applications is that they run and monetize like services, over extended periods of time. Most players will download the game long after the original release, and they’ll keep playing it for months as we publish new content.

Such a model puts the focus on iterative development, facilitating flexibility and growth. It’s quite different from premium games, where most energy is spent on the initial release, possibly followed by as few patches as possible.

Running a game as a service influences priorities in some very practical terms.

Keeping the user’s game state is crucial

I firmly believe that the worst thing any program can do is to lose user data. Games are no exception. But while corrupting saved progress in most games is just an annoyance, in free-to-play it often means the loss of real money that the player spent on virtual currency or other in-app purchases. Jelly Splash has a migration system ensuring that user data is correctly upgraded with each game update, so players don’t lose their progress. Upgrade scenarios are also an obligatory part of our testing plan.
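To make the idea of a migration system concrete, here is a minimal sketch in Python. It is not the actual Jelly Splash implementation: the save format, the field names (`coins`, `highest_level`, `stars`) and the version numbers are all invented for illustration. The only idea taken from the text is that saved data gets upgraded step by step with each game update.

```python
import copy

# Hypothetical save format: a dict with a "version" field.
# Each migration upgrades the state from version N to version N + 1.

def _v1_to_v2(state):
    # Assumed change: v2 splits the single "coins" counter in two.
    state["purchased_coins"] = state.pop("coins", 0)
    state["earned_coins"] = 0
    return state

def _v2_to_v3(state):
    # Assumed change: v3 stores stars per level instead of the highest level.
    highest = state.pop("highest_level", 0)
    state["stars"] = {str(level): 1 for level in range(1, highest + 1)}
    return state

MIGRATIONS = {1: _v1_to_v2, 2: _v2_to_v3}
CURRENT_VERSION = 3

def migrate(saved_state):
    """Return a copy of saved_state upgraded to CURRENT_VERSION."""
    state = copy.deepcopy(saved_state)  # never mutate the only copy of user data
    version = state.get("version", 1)
    if version > CURRENT_VERSION:
        raise ValueError(f"save is from a newer game version: {version}")
    while version < CURRENT_VERSION:
        state = MIGRATIONS[version](state)
        version += 1
        state["version"] = version
    return state
```

Chaining small single-step migrations means a player who skipped several updates still goes through every upgrade in order, and each step can be covered by its own upgrade test.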

We use HockeyApp for collecting crash reports. However, loss of user data doesn’t have to result in a crash, which makes it much harder to detect. We rely on our own tests and app store reviews to spot bugs that don’t show up in HockeyApp reports. Tools like AppAnnie or Otter help to discover any worrying trend in the reviews. Bugs affecting user data appear there very quickly.

You want your backend to be boring

Because user progress needs to be shared across all the devices the player chooses to play on, we use an in-house backend to store it. The backend offers a very basic set of features: saving JSON data, configuring A/B tests, validating payments. It hasn’t changed much in the past two years. It’s the best kind of backend a front-end engineer can wish for: boring and reliable. When a game operates as a service, any issues with the backend may bring the game down, even though Jelly Splash can operate offline.

Testing without QA

One thing unique about Wooga is that we don’t hire dedicated testers. Instead everyone in the company is encouraged to play early versions of our games and provide feedback. Of course teams have to test every new release on top of that.

It’s a system that could probably work only with games: I have trouble imagining a large software company testing a suite of accounting tools with a similar strategy. But it works well for us: with tens of people using multiple devices (Wooga buys everyone a phone or tablet of their choice), we discover most bugs and usability problems before the product goes live.

Not surprisingly, malicious people on the verge of being obsessive-compulsive are the most valuable testers :).

A/B tests

While internal tests help to find problems, we also run live A/B tests. They have other purposes: feature validation and balancing. You can’t design a new game relying on A/B tests, but when the game is doing well, they are useful in making it even better. In Jelly Splash we run about 3 different tests at any given time – more than that can create problems with statistical significance of results.
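One way to read the significance problem (my assumption, the cause is not spelled out above) is that every extra concurrent test splits the audience into smaller groups. The sketch below uses a standard pooled two-proportion z-test, with made-up numbers, to show how the same difference in conversion rate can be convincing with large groups and inconclusive with small ones.

```python
import math

def two_proportion_p_value(conversions_a, users_a, conversions_b, users_b):
    """Two-sided p-value of a pooled two-proportion z-test."""
    rate_a = conversions_a / users_a
    rate_b = conversions_b / users_b
    pooled = (conversions_a + conversions_b) / (users_a + users_b)
    std_error = math.sqrt(pooled * (1 - pooled) * (1 / users_a + 1 / users_b))
    if std_error == 0:
        return 1.0  # no variation at all, so no evidence of a difference
    z = (rate_b - rate_a) / std_error
    # Two-sided tail probability of the standard normal distribution.
    return math.erfc(abs(z) / math.sqrt(2))

# Illustrative numbers only: 10% vs 11% conversion in both cases.
p_large_groups = two_proportion_p_value(2000, 20000, 2200, 20000)
p_small_groups = two_proportion_p_value(200, 2000, 220, 2000)
print(p_large_groups < 0.05, p_small_groups < 0.05)
```

With 20,000 users per variant the one-point difference clears the usual 5% threshold, while with 2,000 users per variant it does not.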

The web is a more flexible platform than native apps when it comes to A/B testing, because it allows for shipping different versions of the code to different groups of users. That’s not possible in the App Store. Instead, each binary must contain every variant of the tested feature. Only after installation does the client download a configuration file from the server to turn parts of the codebase on or off.

Because all test variants live in a single codebase, the number of possible execution paths can quickly get out of hand. Therefore, after concluding the test (plus an additional grace period to ensure we won’t change our mind), we ruthlessly remove unused parts to keep complexity under control.

Ideally, a feature should have only one entry point for switching it on or off. An extra benefit of putting a new feature behind a switch is the ability to disable it in case something goes wrong in production. Such a crisis may never arise, but having a fail-safe option at least partially reduces the stress of pressing the release button in iTunes Connect.
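A feature switch with a single entry point could look roughly like the Python sketch below. The flag names, the JSON layout and the `FeatureSwitches` class are hypothetical and not taken from the Jelly Splash codebase. The sketch only illustrates the pattern: safe defaults ship in the binary, a downloaded configuration may override them, and game code asks one function whether a feature is on.

```python
import json

# Safe defaults compiled into the binary; used when no config is available.
# Flag names are invented for this example.
DEFAULT_FLAGS = {"seasonal_event": False, "new_gate_timer": False}

class FeatureSwitches:
    def __init__(self, defaults):
        self._flags = dict(defaults)

    def apply_remote_config(self, raw_json):
        """Merge a server-provided JSON config; ignore anything malformed."""
        try:
            remote = json.loads(raw_json)
        except (TypeError, ValueError):
            return  # keep current flags if the download is missing or corrupt
        if not isinstance(remote, dict):
            return
        for name, value in remote.items():
            # Only accept known flags with boolean values.
            if name in self._flags and isinstance(value, bool):
                self._flags[name] = value

    def is_enabled(self, name):
        """Single entry point that game code uses to check a feature."""
        return self._flags.get(name, False)

switches = FeatureSwitches(DEFAULT_FLAGS)
switches.apply_remote_config('{"seasonal_event": true}')
if switches.is_enabled("seasonal_event"):
    pass  # show the seasonal event entry point here
```

Publishing `{"seasonal_event": false}` on the server then acts as the fail-safe: the feature disappears for every client that fetches the new configuration, without an App Store release.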

What kind of stress am I talking about? If you have ever released a broken build to the App Store, you probably know. I became painfully aware of it in one of my first weeks on the project. On 26th of November 2013 (definitely a highlight of my engineering career), about 10 minutes after going live, we noticed an unusually high number of crashes. It turned out that a bug affecting a specific subset of users had slipped through our testing. To make things more interesting, the other two developers from my team were, respectively, sick at home and enjoying a vacation in the other hemisphere. Unfortunately, the crash reports were not symbolicated, because the necessary dSYM file was on the computer of my sick colleague, who had created and submitted the build.

Needless to say, there was no fail-safe switch. While we submitted the fix within the next 4 hours, it took another 5 days and over 200,000 crashes before our users could download a fixed version, thanks to the review process and Thanksgiving holidays at Apple.

Some bugs will always slip through. But calling your sick colleagues and asking them for a dSYM file they built a few days earlier is something that should never happen. Which brings me to the next point.

Automation

I can no longer imagine working on a project without build automation. Setting up and maintaining continuous integration (CI) takes some time, but that investment pays off very quickly. CI makes recent versions of the game available to everyone within minutes and ensures that builds are reproducible. All that without taking any time from developers. Additionally, build artifacts, including the binaries and dSYM files, are archived and available, instead of being present only on one developer’s machine. Automating all builds was an immediate follow-up to the November Disaster of 2013 (some were already automated before).

In Jelly Splash we use Jenkins CI for:

- Running unit tests and building the development branch whenever there are new commits.

- Running integration tests every few hours.

- Creating staging and release builds on demand.

Whenever a build fails, developers get notified via a dedicated Slack channel. We tried email first, but chat works better, as people are more likely to immediately respond and get help from others.

Each successful build is uploaded to HockeyApp and immediately available to everyone in the team.
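The archiving step is what would have saved us in November 2013. As a rough illustration only (our real setup relies on Jenkins, and the folder layout, file patterns and the `archive_build` helper here are assumptions), a post-build script could copy the binary and its dSYM bundle into a folder named after the version and commit, and refuse to archive a build that has no symbols.

```python
import shutil
from pathlib import Path

def archive_build(build_dir, archive_root, version, commit):
    """Copy the binary and its dSYM bundle into a per-build archive folder."""
    build_dir = Path(build_dir)
    artifacts = sorted(build_dir.glob("*.ipa")) + sorted(build_dir.glob("*.dSYM"))
    if not any(artifact.name.endswith(".dSYM") for artifact in artifacts):
        # Without symbols, crash reports for this build cannot be symbolicated.
        raise FileNotFoundError(f"no dSYM bundle found in {build_dir}")
    target = Path(archive_root) / f"{version}-{commit}"
    target.mkdir(parents=True, exist_ok=False)  # never overwrite an archived build
    for artifact in artifacts:
        destination = target / artifact.name
        if artifact.is_dir():
            shutil.copytree(artifact, destination)  # a dSYM is a directory bundle
        else:
            shutil.copy2(artifact, destination)
    return target
```

Keying the folder by version and commit makes it possible to find the exact symbols for any build that reached players, no matter who triggered it.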

Multi-platform development

Jelly Splash runs on iOS, Android and as a Facebook app available for desktop browsers. The latter uses Flash and lives in a separate Git repository. But the iOS and Android versions share the same Objective-C code. Back in 2012, when Jelly Splash was about to be made, Wooga considered different strategies for supporting multiplatform mobile games, including HTML5 and porting games from scratch. Soon after Jelly Splash turned out to be doing well on iOS, work started to support Android. Eventually we used Apportable, a tool for running Objective-C code on Android with native performance.

No tech is perfect and this one is no exception. Most importantly, the company behind the tool pulled the plug this July… Also, the effort required to get to the current state, where most of the code is shared and releases are simultaneous, was anything but trivial.

As a result, recent Wooga games, like Agent Alice, are now developed with Unity 3D, which provides a reliable cross-platform engine.

Writing code for your future self

If a F2P game turns out to be successful, it will be updated for many years. That requires readable and extensible code. Otherwise the codebase eventually becomes so difficult to manage that only a large rewrite offers a glimpse of hope. This hope is often unwarranted, and I have seen projects fail to survive ambitious rewrites.

Most of Jelly Splash’s code was written after the launch. It was possible thanks to solid architectural foundations laid by the original developers. They didn’t (and couldn’t) anticipate all future changes, but the codebase was clear enough to enable growth in the years to come. It’s worth noting that in less than one year, the entire engineering team was replaced by new people, yet the ship of Theseus kept sailing. Over the past 3 years 13 iOS engineers have worked on the project.

Writing maintainable code is not necessarily a priority for a game with a tough deadline. But it’s crucial if that game is supposed to grow for 4 or 5 years. I see the following factors as essential to make that possible:

- Self-documenting code. Objective-C enables very explicit naming of methods and their parameters, and Apple encourages it. A taste for long method names is an acquired one, but I found it to be very helpful while working with a large codebase authored by many people. As an added benefit, very explicit names reduce the need for comments. Here’s an example of an actual method name from the Jelly Splash code:

- (NSTimeInterval)unlockTimeRemainingForGate:(PLDataModelGate *)gate at:(RemoteTime)time;

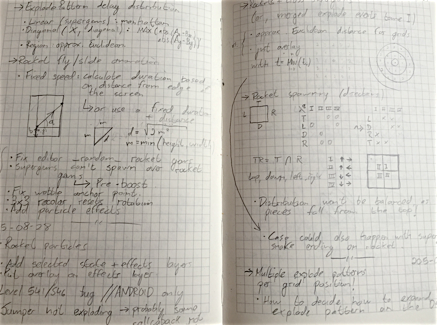

Sometimes the code itself, even with comments, is not enough. A few months ago one of my colleagues from the Jelly Splash team showed me his developer diary (thanks, Gustavo!). Since then I have kept a Markdown file documenting what I did each day and what I learned. I also use it to store small code snippets that can be useful later.

Gustavo’s dev diary is a pencil-and-paper piece of art. I went for a sturdy Markdown file instead.

- JetBrains AppCode. When I started to work with Objective-C I resisted AppCode for 3 months and instead stuck with Apple’s Xcode. However, JetBrains’ IDE is superior in highlighting mistakes, navigating the codebase and refactoring. Recently, when I switched to Swift, I came to appreciate this editor even more: it doesn’t yet fully support Swift, and working without it makes it much harder to benefit from the improvements offered by the language. Incidentally, I switched to JetBrains WebStorm for writing any non-trivial JavaScript. After getting a taste for refactoring tools, renaming with a text editor just doesn’t feel safe anymore.

On a related note, I have to admit that static typing is incredibly helpful when dealing with hundreds of thousands of lines of code. The amount of time saved by catching errors straight in the IDE is tremendous. That doesn’t mean that static typing is a silver bullet: having to recompile the project just to move a few pixels around can offset these benefits and makes space for projects like React Native.

- Prefer frequent small refactorings, avoid rewrites. Big refactorings and complete rewrites are very disruptive, and I have witnessed how they can bring down successful but unmaintainable games. In fact, we even tried to do one large refactoring of crucial networking code in Jelly Splash, only to postpone it indefinitely after conceding defeat. However, we frequently apply Uncle Bob’s boy scout rule: leave the code cleaner than you found it. Small cleanups keep the code readable at minimum cost. I have to admit being guilty of a few refactorings that introduced bugs, but fixing them was a small price for reduced complexity.

- Code reviews. Let me quote Steve McConnell’s Code Complete:

… software testing alone has limited effectiveness – the average defect detection rate is only 25 percent for unit testing, 35 percent for function testing, and 45 percent for integration testing. In contrast, the average effectiveness of design and code inspections are 55 and 60 percent.

While I didn’t measure it myself, I can attest that reviews were extremely helpful in finding mistakes, much more than unit tests. Almost every review I received helped to improve my code and often uncovered overlooked problems. Reviews (and pair programming) are probably the cheapest and most effective way to write better code and learn from your peers. We didn’t use a dedicated tool for reviews – talking in person is more effective and reduces the risk of arguments in an online tool.

- Speaking of tests, we had a relatively small number of integration tests and many more unit tests. Frankly, the former didn’t really help us to find major bugs and consumed more time to maintain than they helped to save. Unit tests proved to be more useful. While I could argue that they are more important when using a weakly typed language, they do help in designing better APIs when written before the implementation code. They also helped us to find regressions introduced by changes to our complex game board logic.
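To give a flavour of the kind of unit test that guards board logic, here is a small Python sketch. The rules it encodes (a chain needs at least three cells of one color, and neighbours may touch diagonally) and the `is_valid_chain` function are simplified assumptions for illustration, not the actual Jelly Splash code.

```python
def is_valid_chain(board, path, min_length=3):
    """Check that path links adjacent, same-colored cells without repeats."""
    if len(path) < min_length or len(set(path)) != len(path):
        return False
    rows, cols = len(board), len(board[0])
    for row, col in path:
        if not (0 <= row < rows and 0 <= col < cols):
            return False
    first_row, first_col = path[0]
    color = board[first_row][first_col]
    for (r1, c1), (r2, c2) in zip(path, path[1:]):
        # Neighbours may touch horizontally, vertically or diagonally.
        if max(abs(r1 - r2), abs(c1 - c2)) != 1:
            return False
        if board[r2][c2] != color:
            return False
    return True

# A tiny hand-made board: R, G and B stand for jelly colors.
BOARD = [
    "RRG",
    "GRB",
    "BBR",
]

def test_is_valid_chain():
    assert is_valid_chain(BOARD, [(0, 0), (0, 1), (1, 1)])      # straight then down
    assert is_valid_chain(BOARD, [(0, 0), (1, 1), (2, 2)])      # diagonal chain
    assert not is_valid_chain(BOARD, [(0, 0), (0, 1)])          # too short
    assert not is_valid_chain(BOARD, [(0, 0), (0, 1), (0, 2)])  # color changes
    assert not is_valid_chain(BOARD, [(0, 0), (0, 1), (0, 0)])  # cell reused

test_is_valid_chain()
```

Writing the assertions first forces a decision about the function’s inputs and edge cases before any board code exists, which is where the API design benefit comes from.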

Sprints and marathons

If a premium game becomes successful, the team starts working on a sequel. When the same happens to a F2P game, the authors must be ready for years-long growth and expansion. Sprinting is exciting, but there’s also something very gratifying in working on a well designed product that keeps changing and provides entertainment to millions of people for years. And while I recently moved to a new and shiny secret project, my time in Jelly Splash was one of the best learning experiences in my career.

Did you know that you can become part of that team too?

Thanks to Cassie Zhen for the help with editing.
